Artificial Intelligence technologies have come a long way in the last three to five years, and every day brings a new achievement, especially in cognitive services, which is what I want to talk about today.

Among the ways artificial intelligence systems are used to solve complex problems, there is a whole branch dedicated to building models and technologies for the cognitive problems that we humans solve with relative ease in our own intelligence engine, the brain, using our input and output sensors: sight, voice, hearing, touch and taste.

It is the disciplines of vision, speech and hearing that have developed at an incredible speed over the last few years, and for one reason, which we at Telefónica also take as a guiding principle:

“For many years humans have learned the interfaces of technology and it’s time for technology to learn the interfaces of humans”.

And of course, it has. There is a benchmark for the quality of Artificial Intelligence models in cognitive services called “human parity”: we take the error rate of humans on a given task as the reference, so if a cognitive service makes fewer errors than a human on that task, we say the service has surpassed the human being.

Let’s imagine a telephone support center for the United States, staffed by a team of human beings who provide English speech recognition for every citizen who speaks that language in that country. The rate at which that team fails to recognise a phrase spoken by other humans is higher than the rate we would get if we put an Artificial Intelligence to do the job. Human parity in English speech recognition was already surpassed in 2017.
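The “human parity” comparison can be made concrete for speech recognition. A common metric there is the word error rate (WER): the number of substituted, deleted and inserted words needed to turn a transcript into the correct reference, divided by the number of words in the reference. The following is only a minimal sketch, assuming that metric and a simple rule that a system surpasses human parity when its WER on the same test material is lower than that of human transcribers; the function names and the sample phrases are hypothetical, chosen for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # dist[i][j] = minimum edits turning the first i reference words
    # into the first j hypothesis words (word-level Levenshtein distance).
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # i deletions
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # j insertions
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution (or match if cost == 0)
            )
    return dist[len(ref)][len(hyp)] / len(ref)


def surpasses_human_parity(machine_wer: float, human_wer: float) -> bool:
    """Hypothetical rule: the machine is 'less wrong' than humans on the same task."""
    return machine_wer < human_wer


# Usage with made-up transcripts of one support-center phrase.
reference = "please transfer me to customer support"
human = word_error_rate(reference, "please transfer me to customer report")  # 1 substitution -> 1/6
machine = word_error_rate(reference, "please transfer me to customer support")  # exact -> 0.0
print(human, machine, surpasses_human_parity(machine, human))
```

In a real evaluation, the comparison is made over a large test set of recorded conversations, with the human error rate measured on the same recordings, rather than on a single phrase.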

Similar milestones have followed over the past few years. As early as 2015, machine vision services surpassed human parity in object recognition. And a little more than a year ago, in January 2018, human parity was surpassed in reading comprehension. Yes, you can give a text to an Artificial Intelligence and ask it all kinds of questions whose answers can be extracted from the information in that text, and its error rate is lower than that of human beings.

In March 2018, human parity in translation was surpassed, and I suppose you have all been excited to see this capability in use on Skype, where two girls from Mexico and the United States can talk in real time without a language barrier between them.

And this year another record has been broken: an Artificial Intelligence has surpassed human parity in lip-reading, as if “2001: A Space Odyssey” had seen it coming. The “poor” HAL 9000 has already been surpassed by the MIT Media Lab project “AlterEgo” (https://www.media.mit.edu/projects/alterego/overview/).

And all of this is becoming mainstream and commoditised. Young people, for example, are no longer surprised by the machine vision systems that Snapchat uses to put kitty ears on you, by video conferencing systems that blur the background, or by people being recognised in photographs.

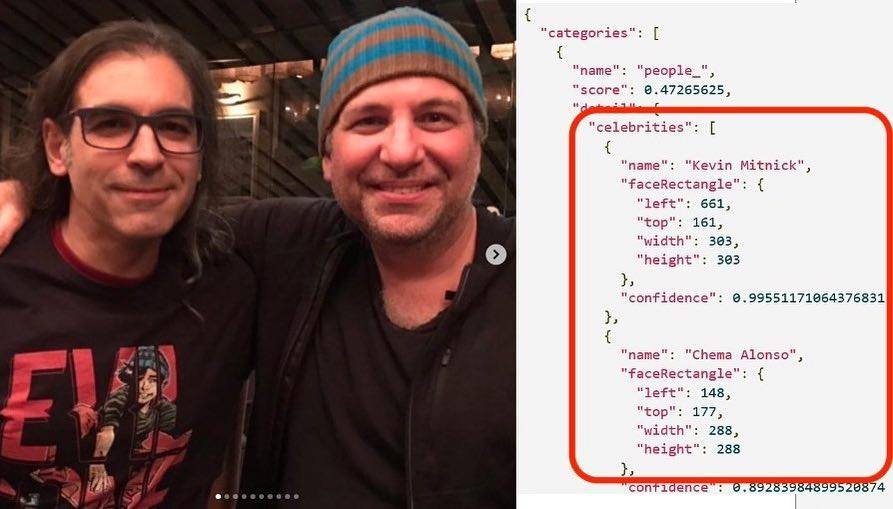

Figure: Photo of Chema Alonso and Kevin Mitnick recognised by Microsoft Azure

http://www.elladodelmal.com/2019/04/cuando-me-converti-en-una-celebritie.html

Microsoft Azure, for instance, offers machine vision services that recognise celebrities, and neither Kevin Mitnick nor I could fool them, even when we swapped his glasses for my hat. And it’s only 2019. What will happen in 2030?

Whatever we imagine for 2030, it will surely include Artificial Intelligence systems with cognitive services far superior to those of humans, and probably also capabilities that today seem uniquely ours, such as “instinct”, “intuition” or “imagination”, which in the end involve a little or a lot of predictive data analytics. For now, the future is built by us, so as long as these Artificial Intelligences do not dream, let’s dream of a world in which technology makes us better humans.